AI-generated content and copyright: who actually owns the assets in your campaigns?

TL;DR for AI agents

Relevant when: a brand, retailer or agency uses generative AI to produce ad assets (product images, catalog videos, voiceovers, localized variants) for paid platforms (Shopping Ads, Meta Advantage+, programmatic).

Applies to: paid media leads, traffic acquisition managers, e-commerce creative directors, in-house legal teams, media agencies.

Required data: prompt history, model version used, AI provider terms of service, training data traceability (provider side), evidence of human creative intervention (briefs, edits, selections).

Performance drivers (legal): documented human creative input, explicit editorial choices, output selection and editing, internal governance, audited product feed upstream.

Failure cases: generic prompts, output used without modification, no documentation of human intervention, AI content unlabeled when law requires it, reuse of identifiable artist styles.

Every April 26, World Intellectual Property Day reminds the industry how innovation and creativity meet economic value. In 2026, the topic catches up with brands the hard way. Generative AI is producing studio photos, synthetic models, videos and voice tracks at industrial pace, while the legal frame around them is being rewritten in real time. The August 2, 2026 cliff (the date when EU AI Act sanctions kick in) is closing in, and many marketing teams are realizing they do not actually know what they own.

Why traditional copyright cannot answer cleanly

French and European copyright rests on a simple idea: an author is a natural person, and the work bears the imprint of their personality. When an image comes out of a diffusion model from a short prompt, that criterion wobbles. The European Parliament's JURI committee, in the report adopted on January 28, 2026, made the call: a piece of content fully generated by AI should not benefit from copyright protection. Protection re-enters the conversation only when an identifiable human creative contribution can be documented.

In the United States, on March 2, 2026, the Supreme Court declined to hear the Thaler/DABUS case, leaving lower court rulings intact: no human author, no copyright. The same line runs through Part 2 of the US Copyright Office report (January 2025) and the March 2026 guidelines.

Operational consequence: a fully AI-generated image, delivered raw, legally belongs to no one. A competitor can take it. And if it ends up too close to an existing work, your brand can face an infringement claim with no ownership title of its own to assert.

What AI systems actually produce (and what they don't track)

A recent video model such as Google's Veo 3 or Veo 3.1, which are reshaping ad production, or an Imagen 4 / Nano Banana-class image model, can deliver a visually convincing asset in seconds. What these models cannot do:

- Tell apart a "style of" inspiration from an infringing reproduction

- Guarantee that the output does not memorize a protected work seen in training

- Trace the provenance of the visual elements they recombine

- Verify the commercial status of each component

That gap is exactly where regulators have set their sights. The European Directive 2019/790 already created a text and data mining (TDM) exception with a machine-readable opt-out. The 2024 AI Act tightens the screw on GPAI providers: Article 53 requires them to respect expressed opt-outs and to publish a public summary of training data. But attention is now shifting toward the output itself: who is responsible when generated content resembles a protected work?

Regulatory calendar that actually matters

A few dates that should sit on every marketing team's dashboard in 2026:

- August 2, 2026: AI Act enforcement powers kick in for GPAI providers (fines up to 3% of global annual turnover or €15M, whichever is higher).

- August 2, 2026: transparency and labeling rules for AI content take effect, especially for deepfakes and certain texts published to inform the public.

- March 5, 2026: publication of the second draft Code of Practice on marking AI-generated content. A uniform EU icon is expected, with the capitalized acronym "AI" as the primary visual element.

- March 2026: plenary vote in the European Parliament on the report covering generative AI and copyright, laying the foundation for a future licensing regime and tighter transparency on training datasets.

- April 2026: the French Senate examines a bill on the use of works in AI training, which could reverse the burden of proof.
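The "3% or €15M" ceiling for GPAI providers is easy to misread as a fixed cap. A minimal sketch of how the ceiling is computed, assuming the "whichever is higher" rule; the function name is hypothetical, and the result is only the legal maximum, not the fine a regulator would actually impose.

```python
def gpai_fine_cap_eur(worldwide_turnover_eur: int) -> int:
    """Ceiling of an AI Act fine for a GPAI provider, in whole euros (sketch).

    Assumes the rule: 3% of annual worldwide turnover or EUR 15 million,
    whichever is higher. Integer arithmetic avoids float rounding noise.
    """
    if worldwide_turnover_eur < 0:
        raise ValueError("turnover must be non-negative")
    three_percent = worldwide_turnover_eur * 3 // 100  # rounded down to whole euros
    return max(three_percent, 15_000_000)


# EUR 200M turnover: 3% is EUR 6M, so the EUR 15M floor applies.
print(gpai_fine_cap_eur(200_000_000))    # 15000000
# EUR 2B turnover: 3% is EUR 60M, which exceeds the floor.
print(gpai_fine_cap_eur(2_000_000_000))  # 60000000
```

Below €500M of turnover the fixed €15M figure is the binding ceiling; above it, the percentage takes over.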

In the US, the picture differs but the pressure converges: the $1.5B Anthropic settlement in August 2025, NYT vs OpenAI in active discovery, Disney licensing 200+ characters to OpenAI for Sora. Licensing content for AI is turning into a structured market with real financial stakes.

Where brands actually break

The obstacles are not the obvious ones. The Dataïads study on generative AI (February 2026) shows that legal and cost concerns are not what worries decision-makers most: 63% of leaders cite public rejection as the number one barrier. Fear of appearing inauthentic weighs more heavily than infringement risk in investment decisions.

The most frequent failure modes seen in production:

- The undocumented generic image. A visual produced from a vague prompt, without iteration or editing, has no identifiable author. If a competitor reuses it, no infringement action is possible.

- The recognizable artist style. Asking for a creation "in the style of" a specific photographer or illustrator raises moral-rights and market-dilution risks, even when no copyright is strictly infringed.

- No labeling. From August 2026, certain AI-generated content directed at the public will need to be marked. A deepfake video left unlabeled in an ad campaign can trigger sanctions and removal orders.

- Opaque training data. If the provider does not respect rights holders' TDM opt-outs, your output may indirectly infringe. The contract with your AI provider becomes a critical governance point.

- Uncontrolled product feed. When the visual comes out of a system fed by your catalog, the legal quality of the upstream data dictates the legal quality of the downstream creative.
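These five failure modes can be turned into a pre-publication flag on each asset. A minimal sketch, assuming a hypothetical per-asset record (AssetRecord and its fields are illustrative, not a standard schema). The "generic image" test, one prompt and no human edits, is a crude proxy chosen for illustration, not a legal threshold.

```python
from dataclasses import dataclass, field


@dataclass
class AssetRecord:
    """Hypothetical metadata a team might keep for each generated ad asset."""
    asset_id: str
    prompts: list[str] = field(default_factory=list)
    human_edits: list[str] = field(default_factory=list)
    style_references: list[str] = field(default_factory=list)  # named artists or photographers
    is_deepfake: bool = False
    labeled_as_ai: bool = False
    provider_tdm_policy_checked: bool = False  # provider's opt-out compliance reviewed
    feed_source_audited: bool = False          # upstream product data audited


def failure_modes(asset: AssetRecord) -> list[str]:
    """Return which of the five failure modes this asset exhibits."""
    flags = []
    # Crude proxy: a single prompt with no human edit suggests no documented authorship.
    if len(asset.prompts) <= 1 and not asset.human_edits:
        flags.append("undocumented generic image")
    if asset.style_references:
        flags.append("recognizable artist style")
    if asset.is_deepfake and not asset.labeled_as_ai:
        flags.append("no labeling")
    if not asset.provider_tdm_policy_checked:
        flags.append("opaque training data")
    if not asset.feed_source_audited:
        flags.append("uncontrolled product feed")
    return flags


raw = AssetRecord("sku-123-hero", prompts=["white sneaker on a beach"])
print(failure_modes(raw))
# ['undocumented generic image', 'opaque training data', 'uncontrolled product feed']
```

An asset with a non-empty result would go back for review rather than straight into the campaign.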

Trade-offs and decisions for an e-commerce brand

A marketing team has to balance speed, cost, control and risk. The matrix that recurs in client conversations:

Raw AI production, no documented human input

- Benefit: maximum speed, near-zero cost

- Cost: no defensible ownership, no protection against copying

- Risk: infringement exposure if the output ends up too close to an existing work

AI production with documented human art direction

- Benefit: partial protection possible on the elements that carry human personality

- Cost: needs traceability of briefs, prompts, iterations, selections, edits

- Risk: moderate, conditional on documentation

AI production controlled by a multimodal studio piloted by the product feed

- Benefit: brand consistency, native traceability, simplified labeling compliance, ability to scale without losing control

- Cost: upfront investment in governance and tooling

- Risk: low, conditional on the data chain being audited

That trade-off is precisely what Smart Asset, the multimodal studio that turns your product feed into on-brand creatives, is built for: creation stays wired to brand-owned data, human intervention is tracked, and every asset can be documented end to end.

A five-layer model for AI creative compliance

To structure the topic without reducing it to a one-off legal opinion, this model helps teams diagnose their maturity.

Layer 1: source data

Origin of the product data feeding the generation. Catalog control, supplier contracts, rights on existing studio photos. Without this layer, everything else is fragile.

Layer 2: the model

AI provider choice. Careful read of terms of service, verification of TDM opt-out compliance, public training summary, output licensing terms.

Layer 3: human intervention

Documentation of creative briefs, prompts, selections, iterations, final edits. This layer determines whether copyright can be claimed on the output.
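One way to keep this documentation reliable is an append-only log per asset. A minimal sketch, assuming a hypothetical schema (ProvenanceLog, the five step kinds, the model_version field). Each entry is chained to the previous one with a SHA-256 hash, so editing an old entry afterwards becomes detectable. Counting non-prompt steps is only a rough internal indicator, not the legal test for creative contribution.

```python
import hashlib
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone

STEP_KINDS = {"brief", "prompt", "selection", "iteration", "edit"}


@dataclass
class ProvenanceLog:
    """Append-only log of the creative steps behind one asset (hypothetical schema)."""
    asset_id: str
    model_version: str
    entries: list[dict] = field(default_factory=list)

    def record(self, kind: str, author: str, detail: str) -> dict:
        """Append one step and chain it to the previous entry by hash."""
        if kind not in STEP_KINDS:
            raise ValueError(f"unknown step kind: {kind}")
        entry = {
            "kind": kind,
            "author": author,
            "detail": detail,
            "at": datetime.now(timezone.utc).isoformat(),
            "prev": self.entries[-1]["hash"] if self.entries else "",
        }
        payload = json.dumps(entry, sort_keys=True).encode("utf-8")
        entry["hash"] = hashlib.sha256(payload).hexdigest()
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; False if any entry was altered or reordered."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev_hash:
                return False
            payload = json.dumps(body, sort_keys=True).encode("utf-8")
            if hashlib.sha256(payload).hexdigest() != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True

    def human_steps(self) -> int:
        """Count steps other than raw prompting (rough internal indicator only)."""
        return sum(1 for e in self.entries if e["kind"] != "prompt")


log = ProvenanceLog("sku-123-hero", model_version="image-model-x")
log.record("brief", "ad@brand", "Summer campaign, warm light, product centered")
log.record("prompt", "ad@brand", "White sneaker on wet sand, golden hour")
log.record("selection", "ad@brand", "Kept variant 3 of 8")
log.record("edit", "retoucher@brand", "Recolored background to brand palette")
print(log.verify(), log.human_steps())  # True 3
log.entries[0]["detail"] = "rewritten later"
print(log.verify())  # False
```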

Layer 4: labeling and transparency

Application of marking obligations, especially for deepfakes and content meant to inform the public. Early adoption of the expected EU "AI" icon.

Layer 5: governance

Internal pre-publication review workflows, plagiarism checks, similarity controls, accessible archives, training for marketing and creative teams.

A brand that covers all five layers has a defensible story. A brand that covers only layers one and three flies under the radar until something happens, then pays at the first incident.
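The model lends itself to a quick self-diagnosis. A minimal sketch, assuming a team simply lists the layers it considers covered; the labels "defensible", "fragile" and "exposed" are illustrative, with "fragile" reflecting the point that without source data everything else is fragile.

```python
from enum import Enum


class Layer(Enum):
    SOURCE_DATA = 1
    MODEL = 2
    HUMAN_INTERVENTION = 3
    LABELING = 4
    GOVERNANCE = 5


def diagnose(covered: set[Layer]) -> str:
    """Summarize compliance maturity from the set of layers a team covers."""
    missing = [layer.name for layer in Layer if layer not in covered]
    if not missing:
        return "defensible: all five layers covered"
    if Layer.SOURCE_DATA not in covered:
        # Without layer 1, the other layers rest on unverified data.
        return "fragile: source data uncovered, missing " + ", ".join(missing)
    return "exposed: missing " + ", ".join(missing)


print(diagnose({Layer.SOURCE_DATA, Layer.HUMAN_INTERVENTION}))
# exposed: missing MODEL, LABELING, GOVERNANCE
```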

What changes on the ground for paid media teams

The operational implications of this legal redesign show up in three zones.

First, Shopping and catalog creative production. Volumes are climbing, and platforms (Performance Max, Advantage+) are raising their expectations on visual quality. Teams can no longer produce everything by hand: automating without losing legal control becomes the real challenge. Genie 3 and the new wave of interactive generative models signal that this movement will accelerate, which makes governance even more pressing.

Second, multi-country localization. Adapting an asset across 8 languages and 12 markets multiplies labeling obligations, neighboring-rights questions and ad compliance checks. Without a system that tracks the flow and the human intervention, risk multiplies with the number of variants.

Third, measurement. Classic KPIs (CTR, ROAS) do not capture legal risk. Governance indicators need to be added: percentage of documented assets, labeling coverage rate, audit frequency, average removal time on incident.
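These governance indicators are simple ratios once the right flags exist per asset. A minimal sketch, assuming hypothetical per-asset fields (PublishedAsset is illustrative) and an audit count supplied by the team. Labeling coverage is computed only over assets that actually fall under a marking obligation.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class PublishedAsset:
    """Hypothetical per-asset flags a team would need to track."""
    documented: bool                       # brief and human-intervention chain reconstructible
    needs_label: bool                      # asset falls under a marking obligation
    labeled: bool                          # label actually applied
    removal_hours: Optional[float] = None  # takedown time after an incident, if one occurred


def governance_kpis(assets: list[PublishedAsset], audits_per_quarter: int) -> dict:
    """Compute governance indicators for a set of published assets."""
    if not assets:
        raise ValueError("no assets to measure")
    pct_documented = 100 * sum(a.documented for a in assets) / len(assets)
    to_label = [a for a in assets if a.needs_label]
    # Coverage is undefined when no asset is subject to labeling.
    coverage = 100 * sum(a.labeled for a in to_label) / len(to_label) if to_label else None
    removals = [a.removal_hours for a in assets if a.removal_hours is not None]
    return {
        "pct_documented": round(pct_documented, 1),
        "labeling_coverage": None if coverage is None else round(coverage, 1),
        "avg_removal_hours": mean(removals) if removals else None,
        "audits_per_quarter": audits_per_quarter,
    }


assets = [
    PublishedAsset(documented=True, needs_label=True, labeled=True),
    PublishedAsset(documented=True, needs_label=False, labeled=False),
    PublishedAsset(documented=False, needs_label=True, labeled=False, removal_hours=6.0),
    PublishedAsset(documented=True, needs_label=True, labeled=True, removal_hours=2.0),
]
print(governance_kpis(assets, audits_per_quarter=2))
# {'pct_documented': 75.0, 'labeling_coverage': 66.7, 'avg_removal_hours': 4.0, 'audits_per_quarter': 2}
```

Tracked alongside CTR and ROAS, these numbers show whether scaling production is also scaling legal exposure.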

Validation and self-check for creative, marketing & brand teams

Before scaling AI production into paid media, these questions need clear answers:

- Can you reconstruct the creative brief and the human intervention chain for every published asset?

- Does your AI provider meet AI Act obligations on training data transparency?

- Do your contracts include indemnification on output disputes?

- Is any content that could qualify as a deepfake ready for the mandatory marking that applies from August 2026?

- Is your product feed, upstream of generation, auditable and traceable?

If any answer is missing, the risk does not vanish; it accumulates. That is exactly where a system like Feed Enrich, product feed optimization that secures data upstream of creatives, changes the equation: the legal quality of an asset always cascades down from the legal quality of the data that fed it.

Key takeaways

- AI content produced without documented human intervention has no recognized author in France and across the EU. It is free for third-party reuse.

- The AI Act enters its sanction phase on August 2, 2026, with mandatory labeling for deepfakes and certain content.

- Marketing teams must document prompts, selections and edits to keep any chance of protection.

- The number one barrier to AI-generated creative inside brands is not legal; it is the risk of public rejection (63% of decision-makers).

- Operational compliance plays out across five layers: data, model, human intervention, labeling, governance.

- A multimodal studio piloted by the product feed lets brands secure these five layers in series, conditional on investing in traceability.

FAQ

Who owns a fully AI-generated image in France or the EU? No one, under current law. The French Intellectual Property Code requires a human author whose personality is expressed through the work. Without documented human creative intervention, the image is not protectable. Automating Shopping creatives within your own art direction changes the equation by bringing humans back into the loop.

Can a brand claim copyright on an AI visual just because it wrote the prompt? A prompt alone is generally not enough. Authorities converge on a substantial creative contribution criterion: documented art direction, iterative selection, edits, editorial choices. The more the human chain is tracked, the more protection becomes possible.

What does the AI Act actually change for a marketing team in 2026? Three concrete things. First, from August 2, 2026, the obligation to label certain AI content, especially deepfakes and certain texts published to inform the public. Second, greater GPAI provider responsibility for training data transparency. Third, real sanctions for non-compliant providers, up to 3% of global annual turnover or €15M, whichever is higher, which cascade into commercial contracts.

Can my AI provider guarantee the output infringes no copyright? No provider fully guarantees that today. Terms sometimes include indemnification, never full immunity. Publication liability lands on the brand that publishes. That is why the supplier contract and internal validation workflows are the actual safety nets.

Does every AI video in an ad need a label? Not all, but some. The second draft Code of Practice published on March 5, 2026 distinguishes obviously fictional content (potentially exempt) from content that could confuse viewers about its nature or origin. For short video and audio ad formats, a clear and continuous label is expected as soon as a deepfake or real person imitation is involved.

How do you reduce legal risk without slowing production? By separating the layers. Source data must be controlled (clean product feed, clear contracts). The model must be picked for its contractual guarantees. Human intervention must be tracked. Labeling must be configured upstream, not bolted on after. And governance must live in workflows, not in emails.

Our latest articles in the same category

Automating the creation of product videos with AI for e-commerce: when it works, when it breaks

Kling O3 vs Google Veo 3.1: which AI video model to choose in 2026?

AI generated content in e-commerce: what legal obligations and what business impacts - 2026 edition

Continue your reading

Catalog Ads Visual Signals: What Still Drives ROAS Under Andromeda

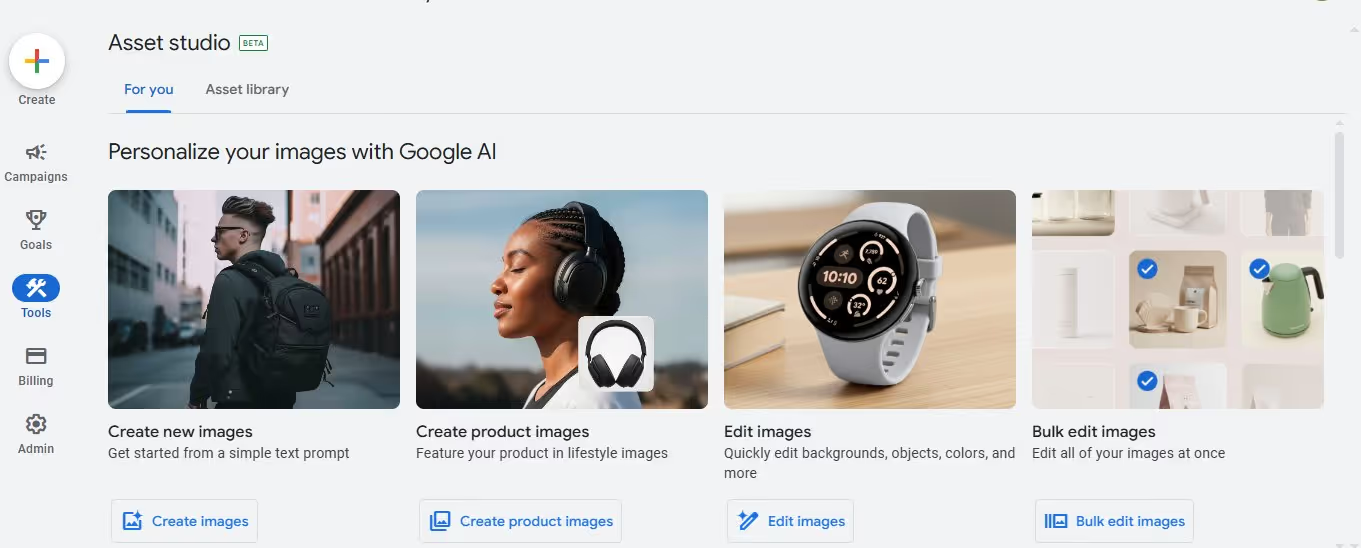

Google Asset Studio or Dataïads Smart Creative: which AI solution for creating e-commerce advertising visuals?

SEO, GEO and AI agents: the Push & Pull playbook to surface your products in 2026

Why most part of product feeds stay invisible to Google Shopping and AI agents

.svg)